SUCCESS STORIES

Machine Learning

Artificial neural networks (ANNs) are used to model complex relationships between inputs and outputs and to find patterns in data. They are therefore widely applied in science and engineering, in areas such as data mining, electric load forecasting, industrial process control, handwritten word recognition, and disease diagnosis.

Training an ANN is the process of finding the best architecture and parameters for the network. Because an ANN is inherently nonlinear, training is a difficult global optimization problem with many locally optimal solutions. When choosing the architecture, a larger network usually approximates the seen (training) data more accurately, but at the cost of generalization capability on unseen (testing) data. An ensemble, which combines the outputs of several networks, offers an effective way to improve generalization capability.
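As a toy illustration of why combining networks can help, the sketch below averages the outputs of several noisy predictors and compares the error of the average with the mean error of the individual members. It is not ELITE: the "members" are hypothetical stand-ins (the true values plus independent noise) rather than trained ANNs, and the names `mse` and `average_ensemble` are chosen here for illustration only.

```python
import random


def mse(pred, target):
    """Mean squared error between two equal-length sequences."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(target)


def average_ensemble(member_preds):
    """Average the members' predictions point by point."""
    n_members = len(member_preds)
    return [sum(column) / n_members for column in zip(*member_preds)]


rng = random.Random(0)
target = [x / 10 for x in range(50)]  # hypothetical ground-truth values

# Assumption: each "member" stands in for a trained network and is modeled
# as the true value plus its own independent Gaussian noise.
member_preds = [[t + rng.gauss(0.0, 0.5) for t in target] for _ in range(5)]

member_mses = [mse(p, target) for p in member_preds]
mean_member_mse = sum(member_mses) / len(member_mses)
ensemble_mse = mse(average_ensemble(member_preds), target)

print(f"mean member MSE: {mean_member_mse:.4f}")
print(f"ensemble MSE:    {ensemble_mse:.4f}")
```

For squared error, the error of the averaged prediction can never exceed the mean error of the members, and the gap grows as the members disagree more with one another. This is one reason member diversity matters in ensemble design.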

Our TRUST-TECH methodology has been applied to construct ELITE, a highly effective platform for building high-performance ensembles of ANNs. The design of ELITE targets multiple criteria: optimal members, improved member diversity, and optimal member combination. These are achieved through a multi-stage optimization framework in which the TRUST-TECH methodology plays a central role.
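To make the idea of member combination concrete, here is a minimal sketch for the simplest case of two members, where the least-squares combination weight has a closed form. This is an assumption-laden toy and not the optimization used in ELITE, which the text above does not detail. The two "members" are hypothetical noisy predictors, and `combine` and `best_weight` are illustrative names.

```python
import random


def mse(pred, target):
    """Mean squared error between two equal-length sequences."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(target)


def combine(w, p1, p2):
    """Weighted combination w * p1 + (1 - w) * p2, point by point."""
    return [w * a + (1 - w) * b for a, b in zip(p1, p2)]


def best_weight(p1, p2, target):
    """Weight w that minimizes the squared error of combine(w, p1, p2).

    Setting the derivative of sum((w*(a - b) + b - t)^2) to zero gives
    w = sum((t - b) * (a - b)) / sum((a - b)^2).
    """
    num = sum((t - b) * (a - b) for a, b, t in zip(p1, p2, target))
    den = sum((a - b) ** 2 for a, b in zip(p1, p2))
    return num / den if den else 0.5  # identical members: any weight works


rng = random.Random(1)
target = [x / 10 for x in range(50)]  # hypothetical ground-truth values

# Assumption: one accurate member and one noisier member.
p1 = [t + rng.gauss(0.0, 0.2) for t in target]
p2 = [t + rng.gauss(0.0, 0.6) for t in target]

w = best_weight(p1, p2, target)
print(f"weight on member 1:  {w:.3f}")
print(f"member MSEs:         {mse(p1, target):.4f}, {mse(p2, target):.4f}")
print(f"equal-weight MSE:    {mse(combine(0.5, p1, p2), target):.4f}")
print(f"fitted-weight MSE:   {mse(combine(w, p1, p2), target):.4f}")
```

On the data used to fit it, the fitted weight is never worse than either member alone or than the equal-weight average. In practice the weights would be fitted on held-out data so that the combination itself does not overfit.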

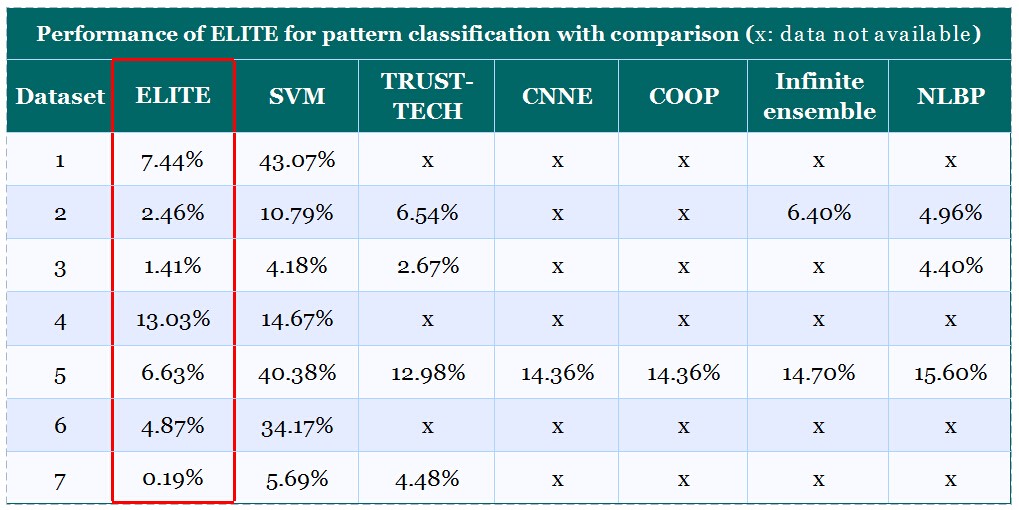

The performance of our TRUST-TECH-based ELITE platform has been evaluated on pattern classification tasks using benchmark machine learning datasets. Compared with other popular methods, ELITE achieved the best performance.